This post is my follow up on the article Large Database Queries on Android – Windows of Opportunity published by Chris Craik who is a developer at Google working on Android UI rendering and performance.

In his article, Chris reviews several unfortunate design decisions in implementation of SQLiteCursor in Android and states that:

Queries that fit within a single CursorWindow avoid all of the problems, which is why we favor them so strongly in Paging and RoomChris Craik, Large Database Queries on Android – Windows of Opportunity

In addition, Chris seem to suggest that 10-20 is the optimal amount of items to query when performing “paging” of large datasets:

It’s common to configure a page size of just ten to twenty items, and to query only that many items at onceChris Craik, Large Database Queries on Android – Windows of Opportunity

While I agree that performing paged queries on background thread is the right design choice, I did not find justification for the above specific (and far reaching) claims in the article itself.

In fact, some time ago I experimented with RecyclerView in order to understand how many items should be fetched from a web server in a single page in order to achieve the best user experience. The numbers I arrived at back then and the factors that had to be taken into consideration are very different from what Chris presents in his article.

Since lists are very common in applications, and since the new Paging Library will (hopefully) make it easier to implement paging in lists – the choice of the page size becomes a more common user experience and performance related decision.

Let’s dig into it together.

SQLite benchmark:

In his article Chris explains how SQLiteCursor uses CursorWindow, which is typically around 2MB in size, under the hood. He provides a very good explanation of several inefficiencies related to the integration between the two, but he doesn’t provide any data that shows the real impact of these inefficiencies.

I believe that any performance related discussion without quantitative data is meaningless at best, therefore I wrote a basic benchmark application that tests SQLite performance on Android devices (source code available here).

Test strategy is simple:

- Preload SQLite database with 200 entries of size 100kB each (20MB in total).

- Perform multiple serial test executions (steps).

- In each step execute multiple queries of fixed size for a total of 200 items and convert the data into POJOs

- In each step measure the total execution time of #3 above

The entire test executed serially on a single background thread.

Test steps are 1, 5, 10, 20, 40, 50, 100 and 200 items per query, which amounts to 200, 40, 20, 10, 5, 4, 2, and 1 queries per test step respectively.

Results for several devices that I had access to:

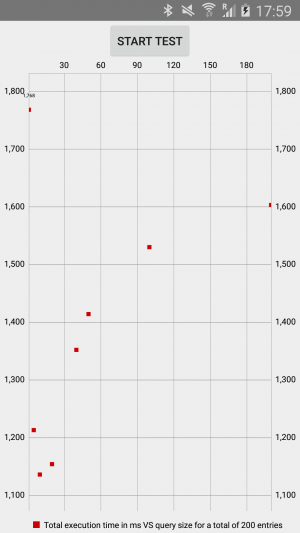

Samsung Galaxy S4:

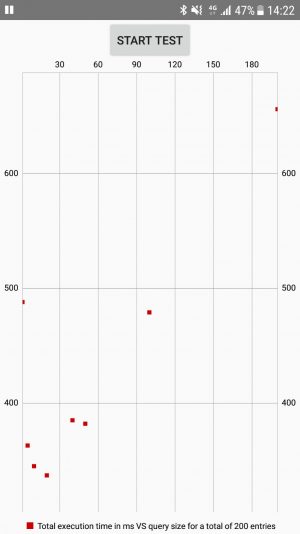

Samsung Galaxy S7:

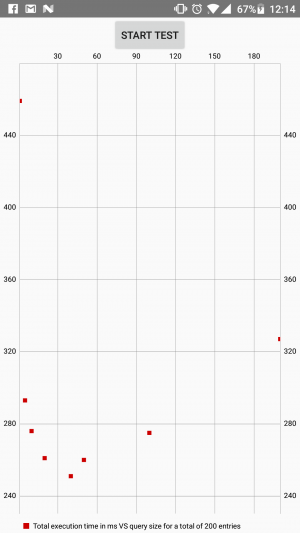

OnePlus 2:

While the exact numbers vary greatly between devices and can even vary between test executions on a single device, there are patterns that show up on all charts:

- Total execution time is highest for steps that perform queries of 1 and 200 items

- Total execution time has a minimum in the interval [20,50] items per query

- Excluding 1 item per query step, the factor between the “slowest” step and the “fastest” step is ~2x

Since the source code for the benchmark is open, I encourage you to install it on your device and share the results in the comments.

If you think that the benchmark is not accurate, or want to expand it with more test cases – feel free to open a pull request.

Implication of benchmark results for the choice of page size:

In his article, Chris says that the typical size of Cursor window is 2MB. Let’s assume that this is indeed the case for all the devices listed above.

Under this assumption, what are the implications of the results for the choice of the page size?

In my opinion, the only valid conclusion from the above results is that effect of CursorWindow’s size on the performance of SQLite queries can and should be ignored. Allow me to explain.

The benchmark measures the total time for a pull of 20MB of data from the database. This is a huge amount of data that will rarely ever be required at a single instant on mobile devices. Even if we do need to pull such amount of data from SQLite, the only correct way to accomplish this would be to query the database on a background thread.

Even on the slowest tested device (Samsung Galaxy 4), the difference between “fastest” pull and the “slowest” pull is about 0.5 seconds.

Now, ask yourself this question: in a pull of 20MB of data from SQLite on a background thread, does 0.5 seconds overhead matter? The answer is no – whether it is 1 second or 1.5 seconds, from user experience perspective it is the same as long as this activity does not cause the UI to be unresponsive.

In practice, the usual amounts of pulled data will be much lower. Notice that in the interval of [10,50] items per query, which amounts to 1-3 CursorWindow capacities, the differences in pull times are smaller. So, whether your query fits in a single CursorWindow or in three – investing any time into optimization will be a total waste.

Sure, if the schema and the queries are not optimized (e.g. selection on a non-indexed column) then the difference will probably be more substantial. However, in this case, I think it is especially important that you don’t try to optimize SQLite performance by tuning the page size, but solve the actual problem instead.

The last reason why you shouldn’t take CursorWindow’s size into consideration when choosing the page size is that rarely ever all the data for the list will be available locally. In most cases, as the user scrolls or flings through the list, the data will be fetched from a web server.

Network access times are so much longer than SQLite access times, that you’ll end up optimizing for web access times anyway, in which case querying SQLite for even 20MB will be totally reasonable.

Paging tactics:

After we concluded that a choice of page size is independent of SQLite performance, let me share with you my thoughts about the factors that do need to be considered. Keep in mind that this is probably not a universal solution, but it might be a good starting point in any case.

The goal of paging in lists is optimizing user experience while keeping the performance reasonable. Exactly in this order – user experience first, then performance.

The best user experience is when the list appears to be infinite and entirely in-memory – the user can scroll and fling the list in any direction, without pauses for data loading.

Single page:

The simplest way to achieve best user experience is to actually load the entire list into memory at once. This is equivalent to saying that the page size equals to the size of the dataset. However, this approach has two drawbacks for large lists: potentially long initial loading time and high memory consumption.

Surprisingly, there are many circumstances in which simply loading the entire contents of the list into memory will work.

Use this approach when the business domain of your application imposes a reasonable upper limit on the size of list’s data. However, you must be sure that this limit is actually imposed by the business domain because, otherwise, as the amount of data in the system grows, your users will start experiencing problems.

Paging for “all data in memory” experience:

If loading the contents of the entire list into the memory is not viable, then paging should be implemented. This means that the data should be loaded into the list in background, while the user is interacting with the application.

When using paging, your goal is to keep, at any instant, enough data in memory such that the user will have the aforementioned “all data in memory” experience. That will amount to the minimum of two “flings worth of items” in any direction from the current user’s position in the list. Let’s understand where this number comes from.

First of all, one fling worth of items is the maximal number of items that the user can skip by flinging his finger over the screen. This number is not difficult to obtain experimentally, and can also be estimated if a flinging experiment can’t be performed (e.g. logic is not ready yet).

In order to estimate one fling worth of items, count the number of items that fit on one screen (you do have design specifications, right?) and multiply it by 10.

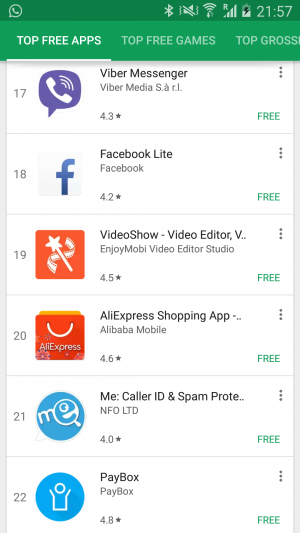

For example, Google Play on my phone:

There are 6 items on each screen, therefore one flinging distance can be estimated as 60 items. The actual number is around 80 items, so the 10x estimation factor is not very accurate, but it is simple to remember and use.

So, why should we aim for having at least two flings worth of items in any scroll direction supported by the list?

Because when the user flings the list, the application might be not able to fetch additional items until the fling completes. This means that one fling worth of items will be scrolled from memory, and you want to have some more items in case the user wants to keep scrolling or flinging during the fetch of the next page.

In practice, however, some pages will usually be fetched from the web, which can take some time. And even if all the data comes from local SQLite, database locking schemes can slow the queries substantially. Therefore, while two flings worth of items is the absolute minimum the application should have in memory at any instant, I would advice to go for at least three or four flings worth of items in order to have a buffer in favor of user experience.

So, given that we decided that our goal is to have X flings worth of items in memory at any instant, how do we choose the page size? Simple – set the page size to be exactly X flings worth of items.

In the above example of Google Play on my device, if I would want to have X = 3, that would amount to a page size of approximately 240 items.

Additional paging considerations:

While the above discussion of page size should provide you a good starting point, there are more considerations that should be taken into account.

In discussing Google Play page size we said that we might want to set it to 240 in order to achieve the best user experience. Imagine now that each item is represented by a bundle of data 1kB in size. One page would amount to approximately 240kB of data then.

Is this a problem? It depends.

In most circumstances I would say that 240kB of data is a reasonable amount of data to pull from the web server for a single page. On 3G and higher networks, download speeds are 500 kB/s and above which means that it will take less than half a second to fetch one page of items.

However, in some places in the world, or in special circumstances (e.g. reach of the limit of data plan), the speed of 3G can be as low as 100 kB/s and even lower. Fetch time for a single page skyrockets to more than 2 seconds in these cases, which can greatly degrade user experience.

There are even places and circumstances in which 2G network can be used, however I don’t think that optimizing for 2G is a good strategy today.

In addition, when using internet abroad, roaming prices can be very high and fetching of 240kB of data can cost more than a dollar!

Due to above limitations, it is often not reasonable to create a true “all list items in memory” experience and some tradeoffs need to be made. Google Play, for example, has a page size of 20 items, which is very far from the page size of 240 items that is required for a true “all list items in memory” experience.

Conclusion:

In this article we discussed paging of data that is being displayed in lists.

We started by showing that SQLite performance is irrelevant to the choice of page size, and then listed several factors that need to be taken into consideration. We also discussed various tradeoffs that might need to be made in order to account for either slow or expensive network data access.

We also saw how a discussion of performance and optimization without accurate and reproducible quantitative data is meaningless at best and might very well be misleading.

It took me just several hours to write the benchmark application (much less than it took me to write this post) and other people performed experiments and published results too. Therefore, there is really no excuse for not showing quantitative data when discussing performance and optimizations – it is simply unprofessional.

Please leave your comments and questions below, and consider subscribing for our newsletter if you liked this post.

Came here from you link in comment of that article. Very interesting findings about optimal item count per page.

Having read the article about inefficiency of SQLiteCursor, I was shocked and confused at the same time. Your article made me even more confused. 🙂

What Chris wanted to say is that SQLiteCursor works ineffectively in the following case:

1. User opens a long list, which is loaded lazily through paging

2. User scrolls to somewhere in the middle of the list

3. User rotates the screen (or leave the app and comes back)

4. State is restored and user wants to scroll forward.

As far as I understand, the point here is to make RecyclerView re-request the data using cursor and the moveToPosition(). According to Chris and provided evidence (source code that lies under the hood of cursor), several initial queries and unnecessary data loads will happen (that could have been avoided).

The solution he provides is: instead of requesting all the data and accessing it via cursor, it’s more efficient to make a small queries (as if loading the data from web). This helps to avoid unnecessary reads from the database.

Do you disagree with this? I am just trying to draw conclusion from all of this)

And also another question: when loading data from the server, do you think it’s reasonable to have different page size based on the device (screen size) and / or current network speed for better user experience? In my not so long experience in Android, I’ve seen either fixed pages size or no page size at all (it is defined by the server).

Hello Anton,

Thanks for your questions.

The case you described is only one of the many cases in which SQLiteCursor inefficiencies will come into play. Even if there is no config change involved and you just read all entries from SQLiteCursor sequentially, some of the inefficiencies that Chris described will come into play once the total amount of data read is more than a single CursorWindow.

In fact, you can easily see this using the provided benchmark app. If you install and run it, then in the logcat you’ll able to see the logs of the test alongside the logs of the OS that will tell you when SQLiteCursor window transitions occur (make sure to set log level to “verbose”).

Chris does suggests that small queries on background thread are better, which sometimes indeed the case, but I think that all the discussion of SQLite efficiency is not very relevant in this context.

You want to perform queries on background thread in order to keep UI responsive, and you want these queries to be small in order to achieve best possible user experience under constraints of performance, network speed and cost. You don’t really need to waste mental resources on SQLite performance because it’s effect is negligible in most cases (unless your application routinely queries SQLite for tens of MB of data, in which case its performance might become a factor).

As for whether the page size can be variable – the answer is yes. I didn’t want to discuss this point in the article because it applies to very few cases.

For example, if you work on Facebook or Twitter app, then these are your considerations:

1. The list is the most important component of the application (the feed)

2. Users routinely scroll the list a lot

3. There are hundreds of millions of users

4. There is no lack of software engineers

In this situation, making arbitrarily complex manipulations and performance optimizations of the list is worthwhile.

Advanced optimizations can involve variable page size as a function of device size, network speed, page number (e.g. smaller first page in order to decrease the “list startup” time), user usage patterns, etc.

However, in most cases advanced optimizations like the above ones would be an overkill.

In any case, you should have a very clear picture of what you are trying to optimize and why. This process involves gathering initial metrics, identifying issues, verifying that the issues are real, implementing optimizations and then gathering the same metrics again in order to observe the difference.